Mission Learning Systems Expands FANUC Robotics and CNC Training Access for Industrial Employers

New workforce training solutions enable manufacturers to build FANUC robotics and CNC skills in-house.

AI is the topic du jour – and has been for several years now – for good reason. Large language models and chatbots have made “AI” feel suddenly accessible. But if you work anywhere near STEM, CTE, manufacturing, transportation, or industrial technology, here is something you may not have realized:

Most AI that students will encounter in technical fields won’t be chatbots. It’ll be applied AI: embedded intelligence inside real systems that sense the world, make decisions, and take action. That intelligence is increasingly present in the tools technicians operate, the equipment engineers design, and the infrastructure operators maintain.

That’s why the most important question for educators isn’t “Should I let my students use ChatGPT?” It’s, “How does applied AI actually work in the real world, and what does that mean for my classroom?”

Applied AI is the use of AI and machine learning to improve physical processes and systems, typically by enabling them to sense, decide, and act with increasing accuracy over time. It’s the difference between an AI limited to digital interactions (ChatGPT) and an AI system that can detect a defect, optimize a route, predict a failure, or personalize an experience in real time on a physical system (self-driving cars, autonomous mobile robots, smart manufacturing systems, etc.).

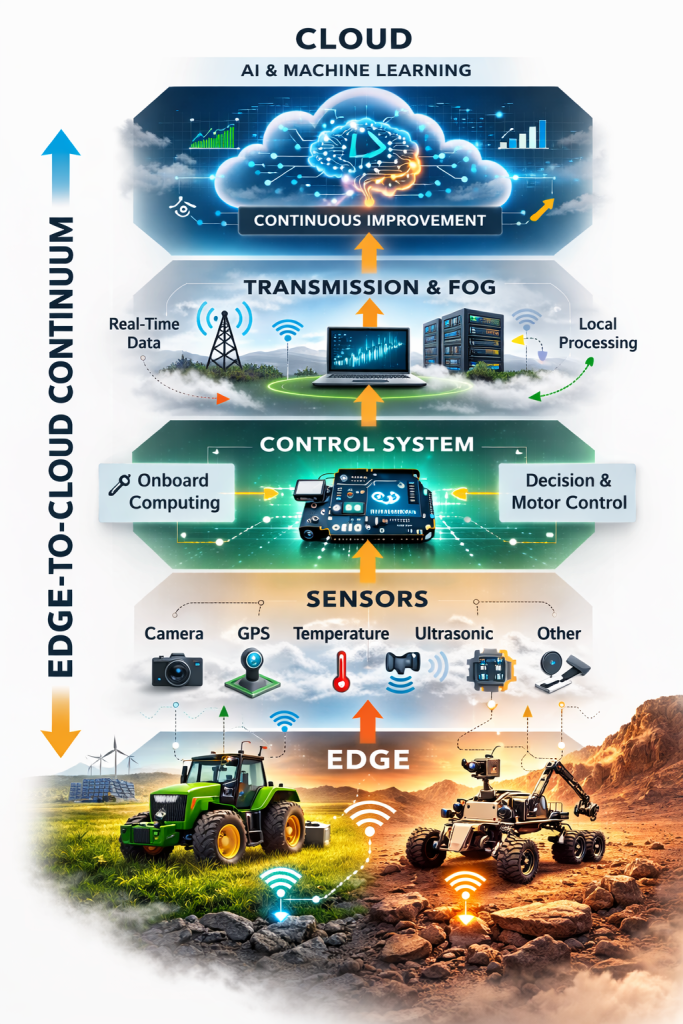

To understand applied AI, you need one foundational concept: the Edge-to-Cloud Continuum.

The Edge-to-Cloud (E2C) Continuum is the full pathway that connects the physical world to intelligence in the cloud: how data is gathered on devices, transmitted to the Cloud, analyzed with AI/ML models, and sent back to the device to improve its performance.

In our framework, the E2C Continuum is easiest to understand as a stack of connected layers. Each layer has a distinct job, and together they explain how applied AI systems operate in the real world.

The Edge device is the physical device the AI system exists to support: an iPhone, a vehicle, a production line, a robot, a tractor.

Sensors are how the physical world becomes data. In industrial settings, that can mean vibration, temperature, pressure, torque, current, vision, proximity or RFID sensors on production equipment. In consumer technology like your iPhone, it can be the 15 sensors in your phone measuring light, pressure, direction, speed, etc., or ways you interact with your apps (every tap, click and swipe is measured as an input signal and captured as a data point).

Sensors do two critical things:

A control system is the brain of the Edge device. It aggregates the sensor data for immediate action and for distribution to the Cloud. It might be a PLC controlling a production line, a robot controller adjusting motion, or iOS on your iPhone, which controls Siri.

In some situations, lightweight AI and machine learning run right here at the Control system. Where speed, latency, and bandwidth become an issue (like an autonomous vehicle that must stop at a split second’s notice), AI is embedded at the control level, often housed on the Edge device itself.

For much larger-scale data processing and Machine Learning operations, data is transferred through the rest of the E2C Continuum.
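To make the split between local action and cloud-bound data concrete, here is a minimal Python sketch. Everything in it is hypothetical: the `EdgeController` class, the 80.0 degree limit, and the batch size of three are illustrative assumptions, not taken from any real PLC or robot controller. It shows a controller that decides immediately on each reading and only queues batches of readings for upload.

```python
from dataclasses import dataclass, field


@dataclass
class EdgeController:
    """Hypothetical edge controller: acts locally, batches data for the cloud."""
    temp_limit: float = 80.0   # assumed safety threshold (degrees C)
    batch_size: int = 3        # assumed number of readings per upload
    buffer: list = field(default_factory=list)
    uploads: list = field(default_factory=list)

    def handle_reading(self, temperature: float) -> str:
        # Immediate, local decision: no network round trip is needed.
        action = "stop" if temperature > self.temp_limit else "run"
        # Queue the reading for later, larger-scale analysis.
        self.buffer.append(temperature)
        if len(self.buffer) >= self.batch_size:
            # Stand-in for sending a batch onward through the continuum.
            self.uploads.append(list(self.buffer))
            self.buffer.clear()
        return action


controller = EdgeController()
actions = [controller.handle_reading(t) for t in [71.5, 79.9, 85.2, 70.0]]
print(actions)             # one local decision per reading
print(controller.uploads)  # batches handed off for cloud analysis
```

The design point is that the stop/run decision never waits on the network, while the heavier analysis can happen later on batched data.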

Transmission is the connectivity layer that moves information between the Edge and the rest of the system: Wi-Fi, cellular, industrial networks, ethernet, protocols, routing, and all the practical realities of latency, bandwidth, intermittent connectivity, and security.

Even though “transmission” is more of a transitional step than a stopping point in the continuum, it’s still important to understand how data is transmitted. A system has to function when networks are congested, when bandwidth is expensive, when data volumes are high, and when response times must be predictable.

Fog computing (sometimes called edge gateways, local servers, or regional compute) sits between the Edge device and the Cloud. This is where data can be stored temporarily for faster retrieval and other key actions including:

Fog often helps smooth data transmission and reduce latency by giving users access to data centers closer to their Edge device, so raw data does not have to travel all the way to the central Cloud and back again.
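The latency benefit can be illustrated with a small Python sketch of a cache that sits between a device and the Cloud. The `FogNode` class and the item names are hypothetical, and a plain dictionary stands in for the central Cloud; real fog infrastructure is far more involved. The sketch only counts how many requests still need the slow trip to the Cloud.

```python
class FogNode:
    """Hypothetical fog node: serves cached items locally, falls back to the cloud."""

    def __init__(self, cloud: dict):
        self.cloud = cloud        # stand-in for the central cloud store
        self.cache = {}           # items kept close to the edge device
        self.cloud_requests = 0   # counts slow round trips to the cloud

    def get(self, key: str) -> str:
        if key not in self.cache:
            # Cache miss: one slow trip to the cloud, then keep a local copy.
            self.cloud_requests += 1
            self.cache[key] = self.cloud[key]
        return self.cache[key]


fog = FogNode(cloud={"item-a": "payload-a", "item-b": "payload-b"})
for key in ["item-a", "item-a", "item-b", "item-a"]:
    fog.get(key)
print(fog.cloud_requests)  # four requests, but only two reached the cloud
```

Repeated requests for the same item are answered locally, which is the basic reason a nearby layer can shorten response times.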

This is where the magic happens. The Cloud is the centralized brain center of the entire E2C Continuum, where data is gathered (often from many users of the same application) in one place, where AI and ML train models, run experiments, analyze massive datasets, monitor system performance, and coordinate improvements across many users, devices and locations.

Some organizations use on-premises (“on-prem”) servers instead. In that setup the Fog phase is skipped, the data never leaves the physical site, and AI/ML applications run only on the organization’s own data. This is often the case for businesses with protected IP, like manufacturers.

Otherwise, common applications like Spotify gather billions of data points from millions of users for AI/ML analysis in the Cloud to help improve Spotify’s recommendation engines and user experience.

Let’s take a closer look at the Edge-to-Cloud Continuum through the lens of Spotify.

Spotify is a useful lens because it’s familiar, but it also reflects how many applied AI systems work in practice.

How does Spotify seem to know what you want to hear next, even when it’s a different genre, a new artist, or a song you’ve never heard before? Sure, it’s a “recommendation engine” – but what does that even mean? It means it’s the Edge-to-Cloud Continuum and applied AI working together as a full system.

When you open Spotify on your phone and press play, the Edge device is your phone. The app itself is the environment you’re interacting with, and it immediately starts generating data through your actions: play, skip, replay, search, like, playlist creation, session length, and even patterns like what time of day you listen.

Those interactions are the Sensor layer in action. In Spotify’s case, the “sensors” aren’t just physical sensors in your phone; they also include your behavioral signals inside the app. Every tap, swipe, and click becomes a data point that helps Spotify understand your preferences and listening habits.

The Control system is the app logic on your device that turns those signals into immediate actions. When you tap play, Spotify starts playback. When you skip a track, it responds instantly. This is where the user experience has to feel seamless and immediate. Some decisions and processing happen locally so the app feels responsive, even before deeper AI/ML processing happens elsewhere in the continuum.

From there, the data moves through Transmission: Wi-Fi, cellular, and internet infrastructure that carries your listening activity and content requests through the network. This layer matters because Spotify has to deliver a consistent experience even under real-world conditions like variable bandwidth, congestion, or changing signal strength.

Before everything reaches the central Cloud environment, Spotify also relies on Fog-layer infrastructure (nearby servers, caching systems, and regional delivery networks). This is what helps songs load quickly and stream smoothly without constant buffering. Fog systems reduce latency by storing and serving content closer to the user, which is especially important when millions of people are streaming at the same time.

Finally, the Cloud is where Spotify’s large-scale AI and machine learning operations happen. This is where data from millions of users is aggregated and analyzed, where recommendation models are trained and refined, and where Spotify learns broader patterns across users, genres, behaviors, and contexts. The Cloud doesn’t just help Spotify respond in the moment; it helps Spotify’s recommendation engine improve over time.
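As a rough illustration of why aggregating many users’ data matters, the Python sketch below ranks unheard tracks by how often listeners with overlapping histories played them. This is a deliberately simple co-occurrence count chosen for teaching purposes, not Spotify’s actual method; the `recommend` function, the user names, and the track names are all hypothetical.

```python
from collections import Counter


def recommend(histories: dict, user: str) -> list:
    """Rank tracks the user has not played by how often similar listeners played them."""
    mine = histories[user]
    scores = Counter()
    for other, tracks in histories.items():
        if other == user:
            continue
        overlap = len(mine & tracks)   # shared tracks act as a crude similarity
        for track in tracks - mine:
            scores[track] += overlap
    # Highest score first; ties are broken alphabetically for a stable order.
    ranked = sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))
    return [track for track, score in ranked if score > 0]


histories = {
    "ana": {"track_a", "track_b"},
    "ben": {"track_a", "track_b", "track_c"},
    "cy": {"track_a", "track_d"},
    "dee": {"track_e"},
}
print(recommend(histories, "ana"))  # tracks favored by listeners most like ana come first
```

No single user’s history contains enough information to produce this ranking, which is why this kind of analysis belongs in the layer where data from many users comes together.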

That’s what makes Spotify such a strong example of applied AI. It’s a full edge-to-cloud system that continuously captures signals, delivers real-time experiences, and uses cloud-scale AI/ML to improve what happens next.

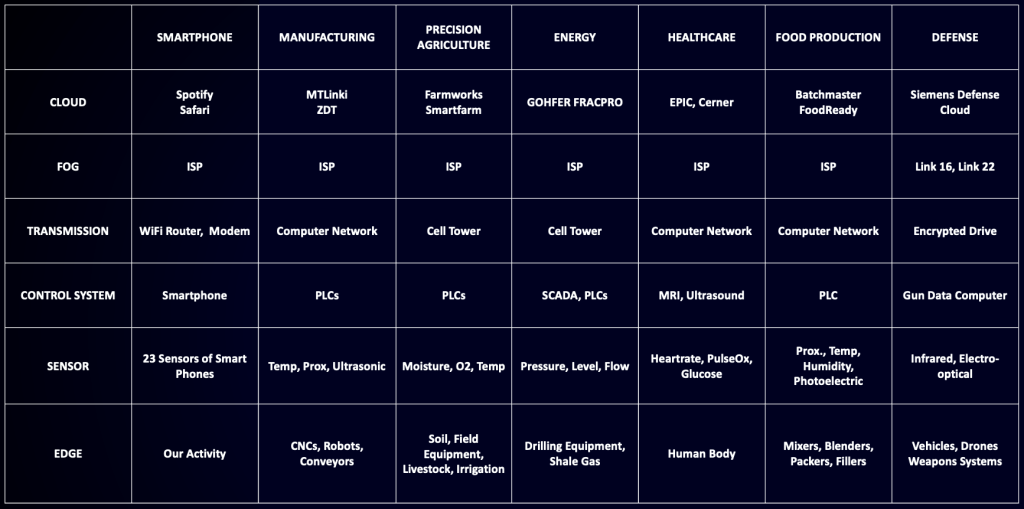

Once you have the E2C lens, it’s easy to translate beyond consumer apps into the industries where applied AI is becoming foundational.

Nearly every industry, from manufacturing to transportation to healthcare, follows this E2C Continuum structure. Once you understand how it works in one area, you can translate it to other fields:

For professionals and learners in STEM and CTE pathways, the most durable AI literacy won’t come from treating AI as a single tool. It comes from understanding systems: how data becomes decisions, how decisions become actions, and how those actions improve over time under real constraints.

That’s exactly what Discover AI is designed to teach: not “how to get better at using ChatGPT” but how to truly understand embedded, applied AI across the industries and roles where it’s already changing what “competent” looks like.

If you want to understand applied AI in a way that translates beyond chatbots and into the systems shaping modern work, the edge-to-cloud continuum is the right starting point.

